29 Apr 2023

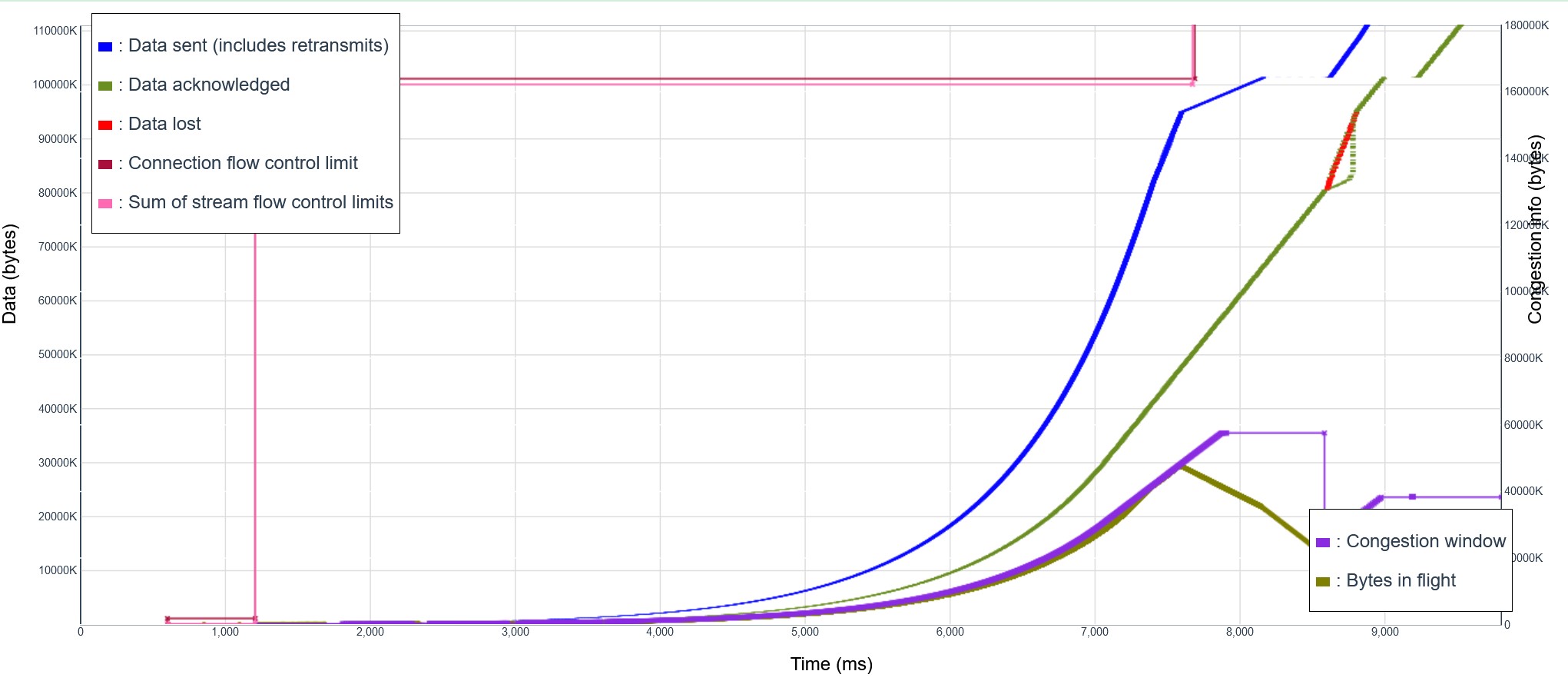

I just implemented in Picoquic the new ACK processing algorithm [proposed for QUIC multipath](https://github.com/quicwg/multipath/pull/217), which processes ACKs independently of the path over which they arrive. It looked good, but there was an interesting regression: the tests that simulate transmission over satellite links were failing. The previous version showed a file transfer concluding in less than 7 seconds, but with the new version it took about 10 seconds. That was strange, since the only change was the computation of the round trip time, and the logs showed that both versions computed the same value. To solve that, I had to take a look at traces captured in log files. The traces of the new execution looked reasonable, as shown in this graph:

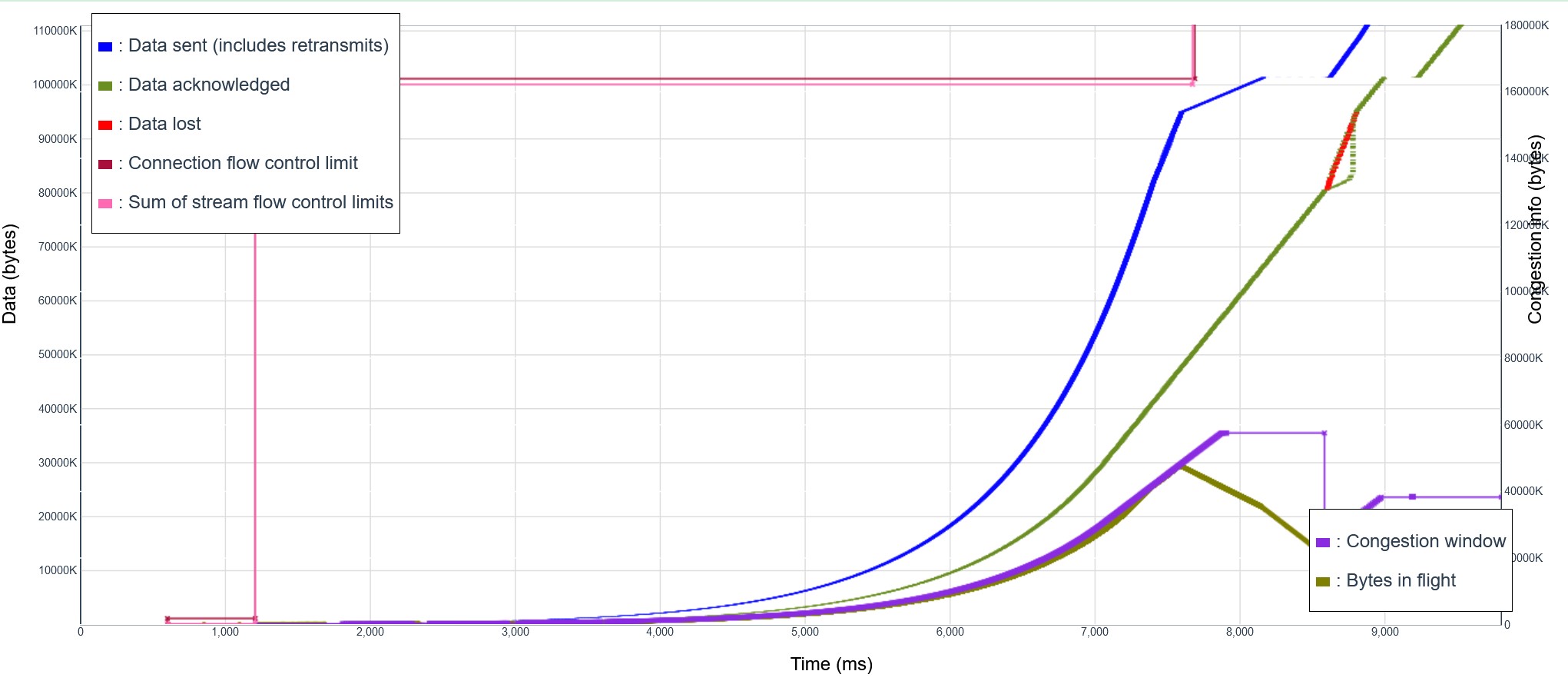

We see the transmission accelerating steadily, just as expected from an implementation of the slow-start algorithm. The curve is very smooth. The congestion window doubles once per RTT until it becomes large enough to saturate the simulated link, after about 7.5 seconds. Then there is a tail of transmission, including retransmission of packets sent at the end of the slow-start period, for a total duration of almost 10 seconds. But the previous version was actually completing the transfer much faster, as seen in this other graph:
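To get a feel for why plain slow start takes so long on a long-delay path, here is a minimal sketch that counts how many round trips a doubling window needs before it covers the bandwidth-delay product. The function name and the numbers in the usage note are assumptions chosen for illustration; they are not taken from the Picoquic tests.

```python
def rounds_to_fill_pipe(initial_cwnd: int, bdp: int) -> int:
    """Count the RTTs needed for a window that doubles every RTT
    to reach the bandwidth-delay product (both in bytes)."""
    if initial_cwnd <= 0:
        raise ValueError("initial_cwnd must be positive")
    rounds = 0
    cwnd = initial_cwnd
    while cwnd < bdp:
        cwnd *= 2  # classic slow start: one doubling per round trip
        rounds += 1
    return rounds


# Hypothetical satellite-like path: 600 ms RTT, 250 Mbps link.
ASSUMED_RTT_MS = 600
ASSUMED_LINK_BITS_PER_SEC = 250_000_000
ASSUMED_INITIAL_CWND = 12_000  # bytes, e.g. ten 1200-byte packets

# Bandwidth-delay product in bytes, computed with integers only.
assumed_bdp = ASSUMED_LINK_BITS_PER_SEC // 8 * ASSUMED_RTT_MS // 1000
rounds = rounds_to_fill_pipe(ASSUMED_INITIAL_CWND, assumed_bdp)
```

With these assumed values the window needs 11 doublings, so several seconds go by before the link is full. That is the general shape of the smooth curve, even if the real test parameters differ.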

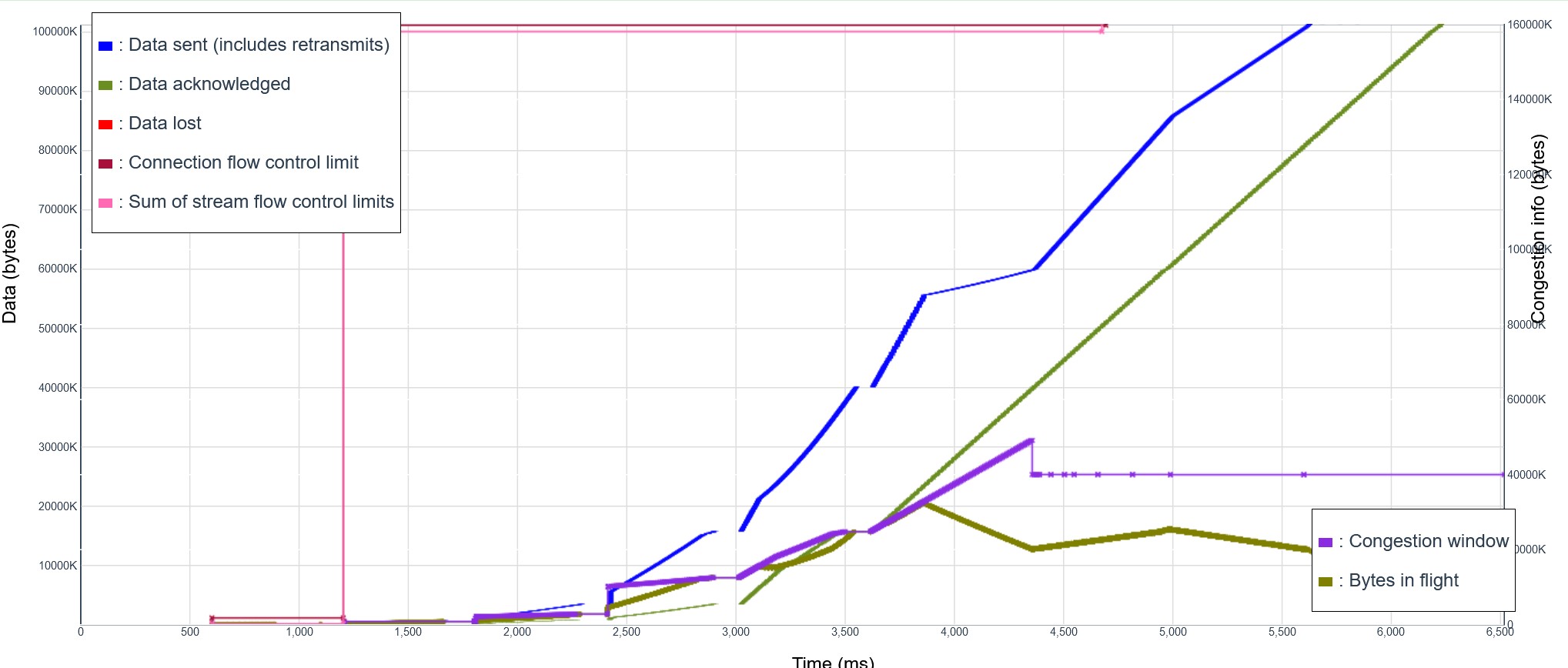

Spot the difference? The old curve was not as smooth. We see a series of segments at progressively higher speed, often starting with a vertical line that indicates many packets sent in quick succession. These packet trains will be received by the other end at close to line speed, and the arrival of ACKs will reflect that speed. Picoquic uses that information to compute an estimate of the path capacity. This allows the congestion window to grow much faster than with the regular slow-start algorithm, so the whole transmission lasts less than 7 seconds. But why did this not happen in the new variant?
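The idea of deriving a capacity estimate from a packet train can be sketched as follows. This is a simplified illustration, not the actual Picoquic code: the function name, the input format and the use of arrival times (rather than ACK timing) are all assumptions.

```python
def estimate_rate_from_train(arrivals):
    """Estimate the delivery rate, in bytes per second, from one packet train.

    `arrivals` is a list of (arrival_time_us, size_bytes) tuples sorted by
    time. Returns None if the train is too short to measure anything.
    """
    if len(arrivals) < 2:
        return None
    span_us = arrivals[-1][0] - arrivals[0][0]
    if span_us <= 0:
        return None
    # The first packet only marks the start of the measurement interval,
    # so its bytes are not counted as delivered during the interval.
    delivered = sum(size for _, size in arrivals[1:])
    return delivered * 1_000_000 / span_us


# Five 1000-byte packets arriving 1 ms apart (hypothetical values).
train = [(t * 1000, 1000) for t in range(5)]
rate = estimate_rate_from_train(train)
```

The sketch also shows why a long enough train matters: with only one or two packets sent back to back, there is little or nothing to measure, and the sender falls back to whatever the window growth allows.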

If you compare the very beginning of the two curves, you will notice the small vertical lines at the beginning of each new round trip period in the old curve. They are missing in the new curve. It is hard to pinpoint the exact cause, but some detail changed, and then the whole behavior changed. That reminded me of the old story about a butterfly flapping its wings on a Pacific island, and next thing you know there is a typhoon approaching Hawaii. There was no butterfly here, probably just a tiny change in the timing and sequence of packets, but then the connection fell into a pattern where pacing enforces a form of ACK clocking, and the code never had a chance to properly estimate the bandwidth of the path.

I fixed it by forcing a pacing pause if the bandwidth estimation fails during slow start. The transmission only restarts after enough pacing credits have been accumulated for sending a long enough train. With that, the tests do complete in less than 7 seconds. I am glad that the tests exposed the issue, which was indeed a bug. The butterfly flapping its wings and causing a typhoon is a metaphor for chaotic systems, in which tiny changes can have unforeseen consequences. The code behavior exposed here was chaotic, and that’s not good. Code should be predictable, and behavior should never be left to chance!
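The pacing pause can be pictured with a simple credit bucket: the sender waits until the bucket holds enough credit for a whole train, then sends the train in one burst. The sketch below only illustrates that idea. The function name and parameters are hypothetical, and the real Picoquic pacing logic is more involved.

```python
def pause_before_train(credit_bytes: float,
                       pacing_rate_bytes_per_sec: float,
                       train_bytes: float) -> float:
    """Return how long to pause, in seconds, until the pacing bucket
    has accumulated enough credit to send `train_bytes` in one burst."""
    if pacing_rate_bytes_per_sec <= 0:
        raise ValueError("pacing rate must be positive")
    missing = train_bytes - credit_bytes
    if missing <= 0:
        return 0.0  # enough credit already: send the train now
    return missing / pacing_rate_bytes_per_sec


# Hypothetical values: 2000 bytes of credit, pacing at 1 MB/s,
# and a train of ten 1200-byte packets.
pause = pause_before_train(2_000, 1_000_000, 12_000)
```

With these assumed values the sender pauses for about 10 ms and then releases the whole train, which gives the receiver and the ACK stream a burst long enough to measure.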

If you want to start or join a discussion on this post, the simplest way is to send a toot on the Fediverse/Mastodon to @huitema@social.secret-wg.org.